Gemini 3.1 Pro API Pricing: $1/M Input, $6/M Output – Low-Cost Grsai Access Guide for Developers

Have you tried Gemini 3.1 Pro yet? Google says its reasoning ability has more than doubled compared with Gemini 3 Pro. Is it really that impressive? This article covers the core capabilities of Gemini 3.1 Pro, how developers can call the Gemini 3.1 Pro API, and how ordinary users can start using it quickly.

I. Gemini 3.1 Pro Builds an All-Round Reasoning Brain

What exactly does ARC-AGI-2 test, and what does 77% mean?

Gemini 3.1 Pro scored 77.1% on the extremely challenging ARC-AGI-2 benchmark, more than doubling the previous generation's reasoning performance. Three months ago, Gemini 3 Pro scored 31.1%, which was respectable at the time; 3.1 Pro now reaches 77.1%, roughly 2.5 times that score. By comparison, OpenAI's o1 and GPT-5.2, which also focus on reasoning, scored only 52.9% on this test.

This benchmark measures reasoning ability rather than stored knowledge. What makes it special is that it shows you only a few grid patterns as ‘examples’; you have to deduce the underlying transformation rule yourself and then apply it to entirely new patterns.

Humans can solve this kind of puzzle almost intuitively, but an AI cannot rely on rote memorization or statistical guessing; it has to actually infer the logic. Because the test targets exactly the area where ‘humans excel and AI struggles,’ ARC-AGI has long been regarded as an important measure of progress toward artificial general intelligence (AGI).
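To make the task format concrete, here is a toy sketch in Python. It is not an ARC-AGI-2 solver and says nothing about how Gemini reasons internally; it only mirrors the setup described above: a few input/output grid pairs, a search for one rule consistent with all of them, and that rule applied to a new grid. The four candidate transformations are a hypothetical, hand-picked hypothesis space; real ARC tasks use far richer and less predictable rules.

```python
from typing import Callable, Dict, List, Optional, Tuple

Grid = List[List[int]]


def flip_horizontal(g: Grid) -> Grid:
    """Mirror each row left-to-right."""
    return [list(reversed(row)) for row in g]


def flip_vertical(g: Grid) -> Grid:
    """Mirror the grid top-to-bottom."""
    return [list(row) for row in reversed(g)]


def transpose(g: Grid) -> Grid:
    """Swap rows and columns."""
    return [list(col) for col in zip(*g)]


def rotate_90(g: Grid) -> Grid:
    """Rotate clockwise: flip top-to-bottom, then transpose."""
    return transpose(flip_vertical(g))


# A tiny, hand-picked hypothesis space of candidate rules (hypothetical).
CANDIDATES: Dict[str, Callable[[Grid], Grid]] = {
    "flip_horizontal": flip_horizontal,
    "flip_vertical": flip_vertical,
    "transpose": transpose,
    "rotate_90": rotate_90,
}


def induce_rule(examples: List[Tuple[Grid, Grid]]) -> Optional[str]:
    """Return the first candidate rule that maps every example input to its output."""
    for name, rule in CANDIDATES.items():
        if all(rule(inp) == out for inp, out in examples):
            return name
    return None


if __name__ == "__main__":
    examples = [
        ([[1, 2], [3, 4]], [[3, 1], [4, 2]]),
        ([[5, 0, 0], [0, 0, 0]], [[0, 5], [0, 0], [0, 0]]),
    ]
    rule_name = induce_rule(examples)
    print(rule_name)  # rotate_90
    # Apply the inferred rule to a grid the "solver" has never seen.
    print(CANDIDATES[rule_name]([[7, 8], [9, 0]]))  # [[9, 7], [0, 8]]
```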

The 77.1% score suggests that Google has found an effective way to scale reasoning capability. While other models still lean heavily on adding parameters, Gemini 3.1 Pro leads clearly in ‘understanding logic and deducing rules.’

How does the three-level thinking mode save money?

Previous Gemini models had only two tiers, fast response (Fast) and deep reasoning (Deep Think): you got either an instant reply or a long thinking chain that took a long time to produce the output you wanted.

Gemini 3.1 Pro adds an intermediate tier, giving a three-level thinking system. Developers can switch between low, medium, and high via the ‘thinking_level’ parameter. Each tier has an internal thinking-token budget that directly determines how much effort the model spends on internal reasoning. For simple API calls, use the low tier to cut latency and cost; for complex debugging, switch to the high tier.

Low: Fast response mode with the lowest thinking-token budget, suited to simple everyday tasks. The model answers directly from intuition and training data, with the shortest thinking process. Compared with the previous generation's Fast mode, it responds faster and costs less; for simple tasks, it can save 60%-80% of reasoning costs compared with using the high tier.

Medium: Balanced reasoning mode with a moderate thinking-token budget, performing medium-depth logical analysis. Its reasoning quality is equivalent to the previous-generation Gemini 3 Pro's ‘high’ tier at only about 40% of the cost, so you can handle tasks of the same complexity as before for much less money.

High: Deep reasoning mode (Deep Think Mini) with the highest thinking-token budget, suited to complex professional tasks. The model activates a powerful reasoning engine similar to ‘Deep Think’ and performs very deep multi-step thinking to get the best results. It costs more than the low and medium tiers, but compared with the previous generation's Deep Think mode, the cost for the same depth is about 30% lower, because the optimized architecture spends the budget more efficiently.
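The tier choice travels with each request. Below is a minimal sketch of a Gemini-native request body that sets the tier. It assumes the field layout Google documents for Gemini 3 models in REST JSON (`generationConfig.thinkingConfig.thinkingLevel`, camelCase), assumes the ‘medium’ value is accepted as this article describes, and assumes Grsai forwards the field unchanged. The `LEVEL_FOR_TASK` table is a hypothetical routing helper that simply encodes the advice above.

```python
import json

# Tier names as described in the article; accepted values are an assumption to verify.
THINKING_LEVELS = ("low", "medium", "high")

# Hypothetical routing table following the article's advice:
# simple calls -> low, everyday analysis -> medium, complex debugging -> high.
LEVEL_FOR_TASK = {
    "simple": "low",
    "analysis": "medium",
    "complex_debugging": "high",
}


def build_generate_content_body(prompt: str, thinking_level: str = "low") -> dict:
    """Build a Gemini-native generateContent body that sets the thinking level.

    Assumed field layout: generationConfig.thinkingConfig.thinkingLevel.
    """
    if thinking_level not in THINKING_LEVELS:
        raise ValueError(
            f"thinking_level must be one of {THINKING_LEVELS}, got {thinking_level!r}"
        )
    return {
        "contents": [{"parts": [{"text": prompt}]}],
        "generationConfig": {"thinkingConfig": {"thinkingLevel": thinking_level}},
    }


if __name__ == "__main__":
    body = build_generate_content_body(
        "Why does this recursive function overflow the stack?",
        LEVEL_FOR_TASK["complex_debugging"],
    )
    print(json.dumps(body, indent=2))
```

The resulting dictionary can be posted the same way as the native request in Method 2 of section IV.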

Native multimodal support: a broader range of perception

No preprocessing required, native understanding: The model natively supports text, images, audio, video, PDF, and code, with no need for OCR or other preprocessing tools. Upload a research paper with charts, and the model reads the text and understands the charts at the same time; give it code, and it analyzes the logic and the comments together.

Seamless cross-modal information fusion: True multimodality means connecting information across modalities. Gemini 3.1 Pro can reason jointly over video frames, audio narration, and text subtitles, building its understanding of complex tasks from a richer set of inputs.
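Here is a sketch of what ‘native’ multimodal input looks like at the request level. It assumes Google's Gemini REST format, where an image travels as a base64 `inline_data` part next to a text part in the same `contents` entry. The prompt and the placeholder bytes are illustrative, and whether Grsai's native endpoint accepts inline media in the same way is an assumption. For large files such as long videos, Google's own API generally expects an upload through its Files API rather than inline data.

```python
import base64


def build_multimodal_body(prompt: str, image_bytes: bytes, mime_type: str = "image/png") -> dict:
    """Combine an inline (base64) image and a text prompt in one Gemini-native request."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "contents": [
            {
                "parts": [
                    # The image part: raw bytes are base64-encoded into a JSON string.
                    {"inline_data": {"mime_type": mime_type, "data": encoded}},
                    # The text part: the instruction about the image.
                    {"text": prompt},
                ]
            }
        ]
    }


if __name__ == "__main__":
    # Placeholder bytes (only the PNG signature) so the sketch runs without a file.
    # In practice you would read a real file, e.g. open("chart.png", "rb").read().
    fake_png = b"\x89PNG\r\n\x1a\n"
    body = build_multimodal_body("Summarize the trend shown in this chart.", fake_png)
    print(body["contents"][0]["parts"][0]["inline_data"]["mime_type"])  # image/png
```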

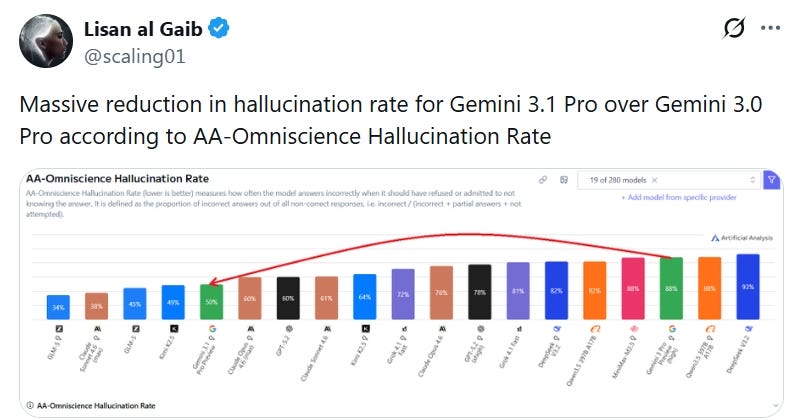

Lower hallucination rate, knowing what it doesn’t know

AA-Omniscience Index leaps forward: This index measures the model's ‘self-awareness,’ that is, how well it recognizes the limits of its own knowledge (anti-hallucination). Gemini 3.1 Pro jumped from the previous generation's 13 points to 30 points, ranking first among mainstream models. In practice, it more reliably declines questions it does not know the answer to and is less likely to give incorrect information in areas it does know, which reduces the chance of confident nonsense.

Significance for professional reasoning: In rigorous scenarios such as code debugging and academic research, ‘I don't know’ is safer than a wild guess. This capability comes from the model explicitly modeling its own knowledge boundaries. In the high tier, the model spends more time on internal verification, further reducing hallucination risk in critical tasks.

II. Real-World Performance of Gemini 3.1 Pro

Code Generation for SVG Animations

From just a short text description, Gemini 3.1 Pro can generate animated SVGs for websites in code form (SVG with CSS/JavaScript). Animations that previously had to be delivered as videos or GIFs can now be produced directly as code, which developers can tweak or drop straight into a page. Because they are vector graphics, these animations stay sharp at any scale, and their files are typically far smaller than equivalent videos or GIFs, which makes them well suited to web embedding.
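For a sense of what ‘animation as code’ means, here is a small hand-written example (not Gemini output): a Python function that emits an SVG whose circle pulses via SVG's built-in `<animate>` element. The whole file is a few hundred bytes of text, and it scales cleanly because it is vector markup. CSS `@keyframes` would be an equally valid way to animate it; the function name and its defaults are hypothetical.

```python
import xml.etree.ElementTree as ET


def pulsing_circle_svg(size: int = 120, color: str = "#4285F4", duration_s: float = 1.5) -> str:
    """Return a self-contained SVG whose circle radius pulses forever (SMIL <animate>)."""
    center = size // 2
    r = size // 4
    r_max = int(r * 1.5)
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" width="{size}" height="{size}" '
        f'viewBox="0 0 {size} {size}">'
        f'<circle cx="{center}" cy="{center}" r="{r}" fill="{color}">'
        # Animate the radius: small -> large -> small, repeating indefinitely.
        f'<animate attributeName="r" values="{r};{r_max};{r}" '
        f'dur="{duration_s}s" repeatCount="indefinite"/>'
        "</circle></svg>"
    )


if __name__ == "__main__":
    svg = pulsing_circle_svg()
    ET.fromstring(svg)  # Raises ParseError if the markup is not well-formed XML
    print(len(svg.encode("utf-8")), "bytes")
```

Write the returned string to a `.svg` file, or paste it inline into an HTML page, to see it animate in a browser.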

Integrating Complex Systems

Gemini 3.1 Pro acts like an all-round ‘translator + engineer’ intermediary. You just say ‘make a real-time space station dashboard,’ and it works out the requirements, connects to obscure aerospace data APIs on its own initiative, and quickly turns complex professional data into an intuitive interface with power meters and 3D models. You do not have to write any code yourself: it handles everything from the technical side to the design.

Interaction Design

Gemini 3.1 Pro can generate complex 3D animations of a starling flock in flight to create immersive experiences. It simulates the flock with 3D algorithms and lets users steer the flock's direction and trajectory in real time through hand tracking. As the flock moves, the system also generates matching background music, combining visuals and sound, which makes it an efficient way to validate prototypes for interaction design and creative programming.

Creative Programming

Gemini 3.1 Pro can turn a literary mood directly into runnable web code. For example, ask it to design a modern-style personal homepage for ‘Wuthering Heights.’ Rather than just retelling the plot, the model captures the oppressive, wild, and profound emotional tone of the original novel and conveys the spirit of its protagonists in the design. This goes beyond plain code generation: the AI is interpreting the literary core of the work.

III. How to Use Gemini 3.1 Pro: Low-Cost Official API Calls

Developers can use Google AI Studio to build with the preview version, while ordinary users can use the Gemini app or the official website (https://gemini.google.com/), although Pro and Ultra users currently get priority. Is there any other way to try it for free? Yes, keep reading!

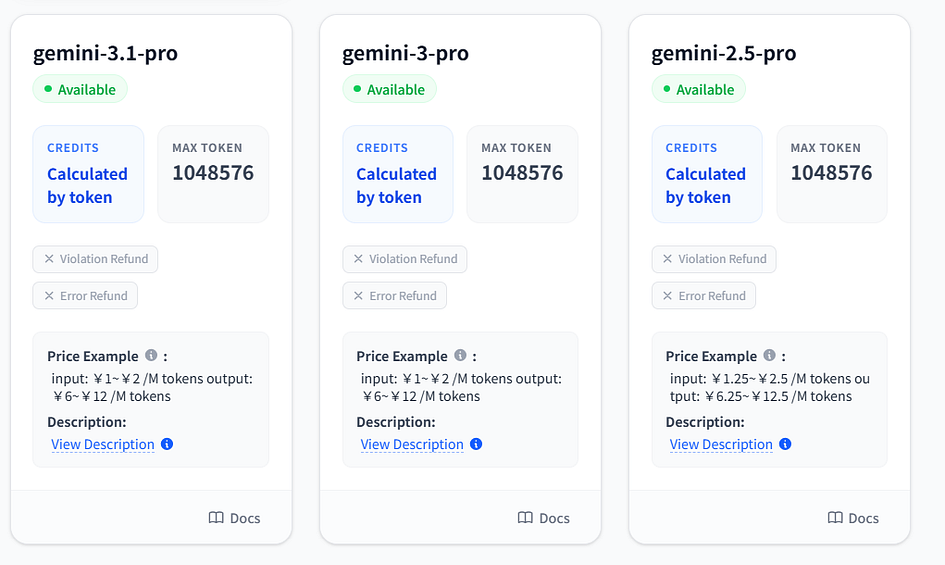

Gemini Official Source API: Grsai

Although Gemini 3.1 Pro's API pricing is unchanged from the previous-generation 3.0 Pro (input $2/M tokens, output $12/M tokens), that is still not cheap for individual users and businesses. Many third-party APIs on the market mark prices up, with middlemen taking a cut. Instead, you can call Gemini's source API through GrsAi (https://grsai.com), which offers Gemini-3.1-Pro, Nano Banana Pro (image generation), and other models at prices below the official ones.

Image generation models:

- Nano Banana 2 — $0.065/image

- Nano Banana Pro — $0.09/image

- Gpt-image-1.5 — $0.02/image

- Nano Banana — $0.022/image

Chat models:

- Gemini-3.1-Pro: input $1~$2 /M tokens, output $6~$12 /M tokens

- Gemini-3-Pro: input $1~$2 /M tokens, output $6~$12 /M tokens

- Gemini-2.5-Pro: input $1.25~$2.25 /M tokens, output $6.25~$12.5 /M tokens

Video models:

- Sora2 — $0.08/clip

- Veo3.1 — $0.4/clip

For more model versions, check the model list: https://grsai.com/dashboard/models
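The price lists above can be turned into a quick per-request estimate. This sketch uses the prices quoted in this article (the low end of Grsai's range), assumes, as on Google's Gemini pricing page, that thinking tokens are billed at the output rate (whether Grsai bills them the same way is an assumption), ignores any long-context pricing tiers, and uses a hypothetical workload. It also shows why the thinking tier matters for cost: every thinking token is charged like an output token.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    input_per_m: float   # USD per 1M input tokens
    output_per_m: float  # USD per 1M output tokens (thinking tokens assumed billed here too)


# Prices quoted in this article; the Grsai figure uses the low end of its range.
OFFICIAL = Price(input_per_m=2.0, output_per_m=12.0)
GRSAI_LOW = Price(input_per_m=1.0, output_per_m=6.0)


def request_cost(price: Price, input_tokens: int, output_tokens: int,
                 thinking_tokens: int = 0) -> float:
    """Estimated USD cost of one request; thinking tokens are charged at the output rate."""
    billed_output = output_tokens + thinking_tokens
    return (input_tokens * price.input_per_m + billed_output * price.output_per_m) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 10k prompt tokens, 1k answer tokens, 4k thinking tokens.
    for name, price in [("official", OFFICIAL), ("grsai (low end)", GRSAI_LOW)]:
        print(f"{name}: ${request_cost(price, 10_000, 1_000, 4_000):.4f}")
    # official: $0.0800
    # grsai (low end): $0.0400
```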

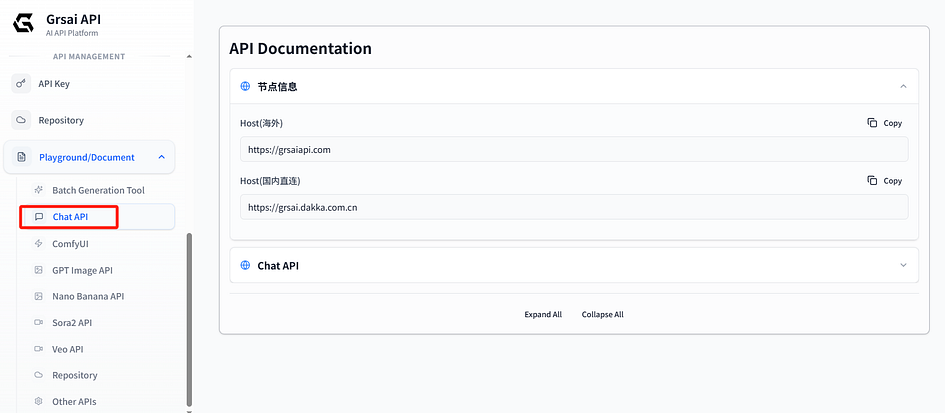

IV. Tutorial: Developer Access to the Gemini 3.1 Pro API

API Node Information

Grsai provides two access addresses (base URL); choose one based on your network environment:

- Overseas: https://grsaiapi.com

- Domestic (mainland China) direct connection: https://grsai.dakka.com.cn

- Host + interface path (full endpoint example): https://grsai.dakka.com.cn/v1/draw/nano-banana

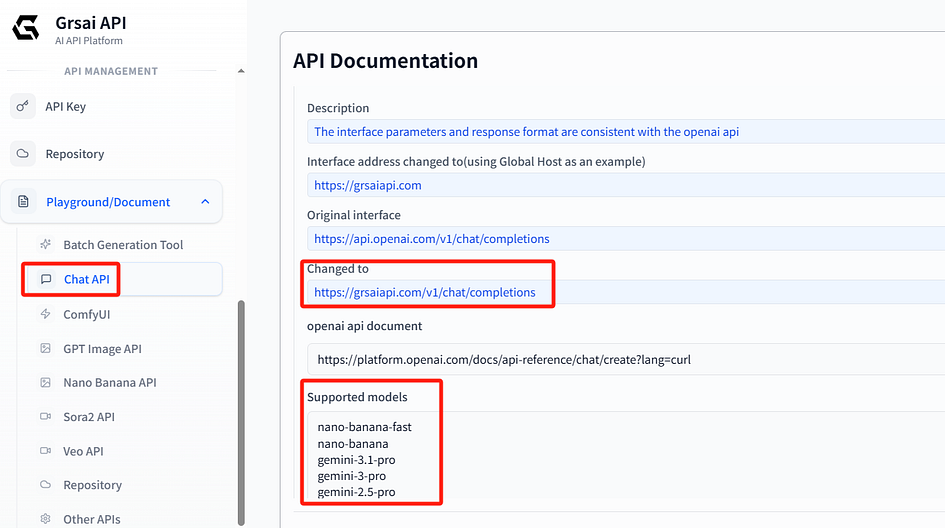

Chat API Call Instructions

Grsai's Chat API is fully compatible with both the OpenAI and Gemini formats. For the OpenAI format, just replace the base URL; for the Gemini format, replace both the base URL and the model name.
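The rule above (OpenAI format: swap only the base URL; Gemini format: swap the base URL and the model name) can be written as two small helpers. This is a sketch: the helper names are hypothetical, and it assumes the overseas host exposes the same paths as the domestic examples shown in this section.

```python
# Base URLs listed above; assumes both hosts expose the same paths.
BASE_URLS = {
    "overseas": "https://grsaiapi.com",
    "domestic": "https://grsai.dakka.com.cn",
}


def openai_chat_url(base_url: str) -> str:
    """OpenAI format: only the base URL changes; the path stays /v1/chat/completions."""
    return f"{base_url.rstrip('/')}/v1/chat/completions"


def gemini_generate_url(base_url: str, model: str) -> str:
    """Gemini format: swap the base URL and use the model name the Grsai backend expects."""
    return f"{base_url.rstrip('/')}/v1beta/models/{model}:generateContent"


if __name__ == "__main__":
    for region, base in BASE_URLS.items():
        print(region, openai_chat_url(base))
        print(region, gemini_generate_url(base, "gemini-3.1-pro"))
```

Note that the OpenAI Python SDK expects `base_url` to end in `/v1` (as in Method 1), whereas these helpers return full endpoint URLs for raw HTTP calls.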

Method 1: Call via Grsai (OpenAI compatible format)

- Original OpenAI interface: https://api.openai.com/v1/chat/completions

- Replaced interface address: https://grsai.dakka.com.cn/v1/chat/completions (using domestic direct connection as example)

- OpenAI API documentation: https://platform.openai.com/docs/api-reference/chat/create?lang=curl

```python
import openai

# Configure the Grsai base URL and your API key
client = openai.OpenAI(
    api_key="your APIKey",
    base_url="https://grsai.dakka.com.cn/v1",  # Domestic direct connection address
)

# Call Gemini-3.1-Pro through the OpenAI-compatible Chat Completions endpoint
response = client.chat.completions.create(
    model="gemini-3.1-pro",
    messages=[
        {"role": "system", "content": "You are an aerospace data expert"},
        {"role": "user", "content": "Analyze the International Space Station's orbital data"},
    ],
    stream=False,
)

# Print the assistant's reply text
print(response.choices[0].message.content)
```

Method 2: Call via the Gemini native format (REST request)

- Original Gemini interface: https://generativelanguage.googleapis.com/v1beta/models/gemini-3.1-pro-preview:generateContent

- Replaced interface address: https://grsai.dakka.com.cn/v1beta/models/gemini-3.1-pro:generateContent (model name must match Grsai backend)

- Gemini API official documentation: https://ai.google.dev/gemini-api/docs

```python
import requests

# Grsai domestic direct connection node + Gemini native interface path
url = "https://grsai.dakka.com.cn/v1beta/models/gemini-3.1-pro:generateContent"

# Your Grsai API key
api_key = "your Grsai-APIKey"

# Request headers
headers = {"Content-Type": "application/json"}

# Request body (Gemini native format)
data = {
    "contents": [
        {
            "parts": [
                {"text": "Explain what a vector graphic is"}
            ]
        }
    ]
}

# Send the request (API key passed as the `key` URL parameter, Gemini style)
response = requests.post(url, params={"key": api_key}, headers=headers, json=data, timeout=60)
response.raise_for_status()  # Fail loudly on HTTP errors, e.g. a wrong key or model name

# Print the full JSON response; the generated text is under candidates[0].content.parts
print(response.json())
```

V. Ordinary User Tutorial for Using Gemini 3.1 Pro

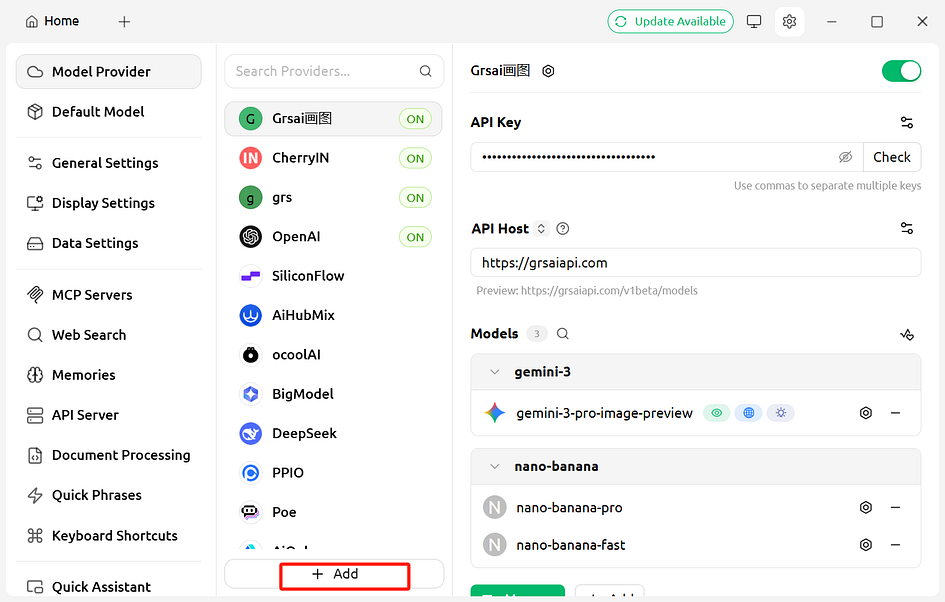

Ordinary users can download tools such as Cherry Studio or Chatbox, which bring many mainstream large models together in one clean interface. With a simple API configuration, you can call different model services without constantly switching between websites or apps.

These tools are all configured in much the same way. Follow my steps one by one and you can be using Gemini 3.1 Pro within 3 to 5 minutes. Most important reminder: copy and paste the model names directly from Grsai's official developer documentation (Chat API section); do not type them by hand! Even an extra space or the wrong capitalization will cause the connection to fail.

1. Enter the settings page: Download and install Cherry Studio. After launching it, click the ‘Settings’ icon in the upper-right corner (usually a gear icon).

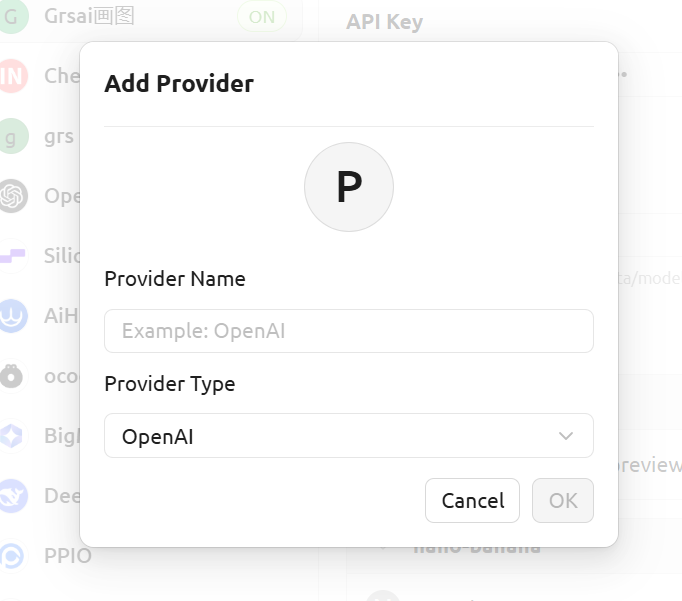

2. Add a model service: In the settings menu, click ‘Model Services,’ then click Add. Enter any provider name you like and choose the provider type; both OpenAI and Gemini types are supported.

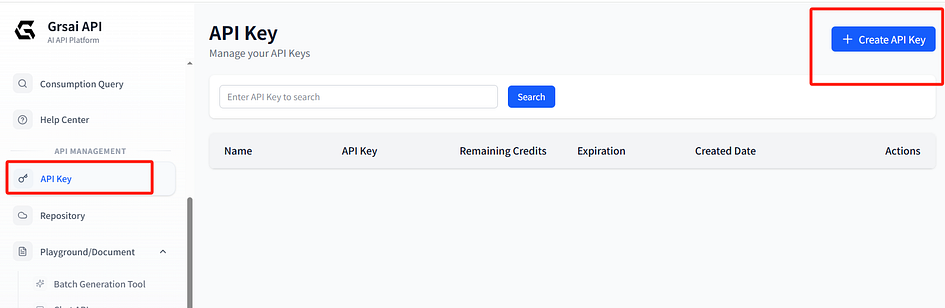

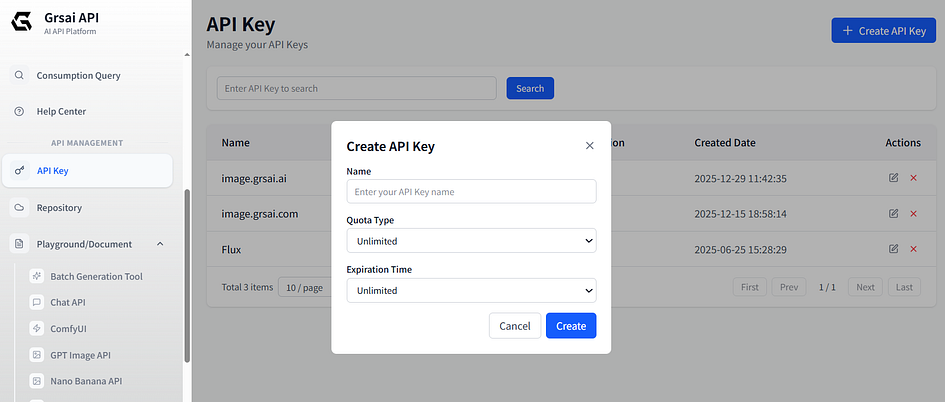

3. Fill in the provider information: Create an API key in the Grsai console (https://grsai.com/dashboard/api-keys; the .com domain requires a VPN, so replace .com with .ai if needed) and copy it.

In Cherry Studio, paste the API key (keep it confidential and do not share it) and the API address (base URL / API Host): https://grsaiapi.com

After filling in the API key and address, add models manually by entering the model names supported by the Grsai backend:

Image generation models:

- nano-banana-fast

- nano-banana-pro

Chat models:

- gemini-3-pro

- gemini-3.1-pro

- gemini-2.5-pro
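If you configure models in a script or config file rather than by clicking, a small check like this catches the stray-space and wrong-case mistakes warned about above. The model list is copied from this article and is only a snapshot (Grsai's model list page is the authoritative source); the function name is hypothetical.

```python
# Snapshot of the model names listed above; Grsai's model list page is authoritative.
SUPPORTED_MODELS = {
    "nano-banana-fast",
    "nano-banana-pro",
    "gemini-3-pro",
    "gemini-3.1-pro",
    "gemini-2.5-pro",
}


def check_model_name(name: str) -> str:
    """Return the name if it matches exactly; otherwise raise with a hint."""
    if name in SUPPORTED_MODELS:
        return name
    cleaned = name.strip().lower()
    if cleaned in SUPPORTED_MODELS:
        # Near miss: the only problems are surrounding spaces or capitalization.
        raise ValueError(f"{name!r} is close to {cleaned!r}: remove extra spaces / fix the case")
    raise ValueError(f"{name!r} is not in the model list copied from the Grsai docs")


if __name__ == "__main__":
    for candidate in ["gemini-3.1-pro", " Gemini-3.1-Pro", "gemini-4"]:
        try:
            print("OK:", check_model_name(candidate))
        except ValueError as err:
            print("Problem:", err)
```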

After setup, return to the assistant page and select the model you want; you can now use Gemini 3.1 Pro in Cherry Studio. For bulk image generation, Cherry Studio is not a good fit, because you must wait for each conversation turn to finish before asking the next question. Use Grsai's free, open-source bulk image generation tool instead:

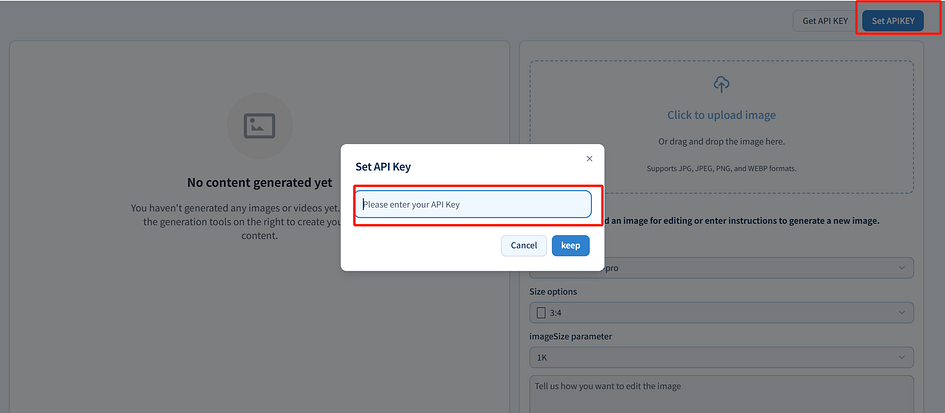

1. Obtain an API key: Copy your API key from the API keys page of the Grsai console (https://grsai.com/zh/dashboard/api-keys; the .com domain requires a VPN, so replace .com with .ai if needed).

2. Configure the API: Open the bulk image generation tool (https://image.grsai.com/; the same .com to .ai note applies), click Settings in the upper-right corner, and paste your API key.

3. Generate: Upload an image, select a model, and enter the size and prompt.
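The bulk tool's internals are not described here, but the underlying idea, sending many independent image jobs at once instead of waiting for one chat turn at a time, can be sketched with a thread pool. `generate_one` is a hypothetical placeholder for whatever API call you make; the request format for Grsai's image endpoint is not covered in this article, so it is not shown. Threads suit this because API calls spend most of their time waiting on the network; keep `max_workers` modest, since the provider's rate limits are unknown here.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def _run_one(generate_one: Callable[[str], str], prompt: str) -> Dict[str, object]:
    """Run one job and capture its error instead of aborting the whole batch."""
    try:
        return {"prompt": prompt, "ok": True, "result": generate_one(prompt)}
    except Exception as exc:  # keep going if a single request fails
        return {"prompt": prompt, "ok": False, "error": str(exc)}


def generate_batch(prompts: List[str], generate_one: Callable[[str], str],
                   max_workers: int = 4) -> List[Dict[str, object]]:
    """Send independent jobs concurrently; results come back in prompt order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(lambda p: _run_one(generate_one, p), prompts))


if __name__ == "__main__":
    # Hypothetical stand-in for a real image-generation API call.
    def fake_generate(prompt: str) -> str:
        if not prompt.strip():
            raise ValueError("empty prompt")
        return f"image-for:{prompt}"

    for row in generate_batch(["a red fox", "", "a city at night"], fake_generate):
        print(row)
```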

VI. Summary

This Gemini 3.1 Pro upgrade mainly brings more than doubled reasoning performance, lower costs, and fewer hallucinations. Whether you are a developer who wants to call the API or an ordinary user who wants to use 3.1 Pro through Cherry Studio or another third-party tool, just follow this guide step by step.
